You know that uneasy feeling when technology moves faster than our ability to secure it? That's 2026 in cybersecurity.

Artificial intelligence promised to revolutionise defence. Instead, attackers got there first. They're using the same large language models that power your Copilot and ChatGPT to write malware, automate reconnaissance, and evade detection.

This isn't science fiction. It's happening right now.

The Numbers Don't Lie

According to the 2026 CrowdStrike Global Threat Report, AI-enabled attacks surged 89% year-over-year. The average breakout time – how long it takes an attacker to move from initial compromise to lateral movement – fell to just 29 minutes, a 65% increase in speed from 2024. The fastest observed breakout occurred in just 27 seconds. In one intrusion, data exfiltration began within four minutes of initial access.

As IBM X-Force observed, attacks against public-facing applications increased 44%, driven by missing authentication controls and AI‑powered vulnerability discovery. Attackers aren't inventing new techniques. They're using AI to accelerate existing ones.

AI-Generated Malware: The New Normal

McAfee Labs uncovered a widespread campaign hiding inside fake downloads for game mods, AI tools, drivers, and trading utilities. Across 443 malicious ZIP files, McAfee identified 48 malicious DLL variants used to infect devices. The campaign was spread through Discord, SourceForge, and FOSSHub. What made it notable? Some parts appeared to have been built with help from large language models. Certain scripts contained explanatory comments and patterns that strongly suggested LLM assistance.

The malware was distributed through platforms users trust, making detection harder. Once executed, infected devices could be used to mine cryptocurrency or receive more malicious payloads.

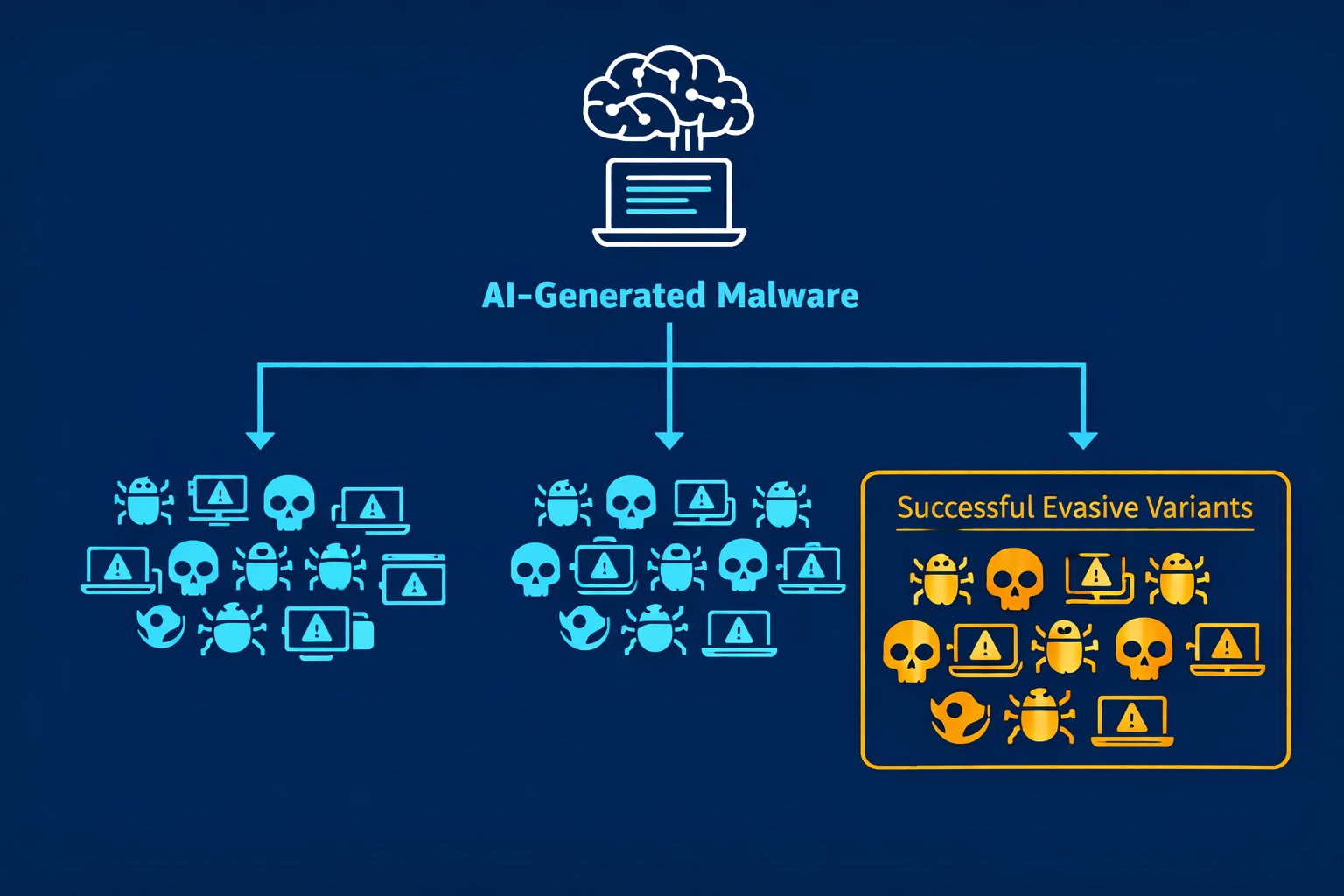

How GenAI Enables Polymorphic Malware

Traditional polymorphic malware changes its structure through pre‑defined routines. Generative AI takes this to another level. Instead of relying on simple automated changes, LLMs can intelligently rewrite and reshape malicious code, producing countless variations that differ in logic, structure, and behaviour while still achieving the same objective – as demonstrated by the 48 distinct DLL variants in the McAfee campaign.

Attackers can now run automated evolution cycles: the AI generates thousands of unique malware variants, testing them against various security tools until a successful evasive variant is found. This enables adversaries to continuously produce new, undetectable samples much faster than human analysts can develop countermeasures.

Perhaps most concerning, GenAI acts as a development assistant, allowing less-skilled actors to generate complex code, translate malware components between programming languages, and troubleshoot scripts that previously required high-level expertise.

Prompt Injection: The New Attack Surface

AI systems are built to trust. That's their fundamental weakness.

The Center for Internet Security (CIS) released a report warning that prompt injection attacks are a serious and growing threat. Attackers can manipulate AI systems by hiding malicious instructions in documents, emails, websites, and other data that AI tools are allowed to access. Those hidden instructions can lead to stolen sensitive data, unauthorised system access, and disrupted operations.

As TJ Sayers, Senior Director of Threat Intelligence at CIS, put it: “This report makes clear that technical prompt injections aren't a theoretical problem; they're a real and immediate risk. As organisations race to adopt AI, attackers are finding novel ways to turn these tools against us.”

Real-World Prompt Injection Incidents

In April 2026, Microsoft patched CVE-2026-21520, an indirect prompt injection vulnerability in Copilot Studio (CVSS 7.5). According to VentureBeat's analysis, the vulnerability, discovered by Capsule Security, exploited the gap between a SharePoint form submission and the Copilot Studio agent's context window. An attacker could fill a public-facing comment field with a crafted payload that injected a fake system role message. Copilot Studio concatenated the malicious input directly with the agent's system instructions with no input sanitisation. The injected payload overrode the agent's original instructions, directing it to query connected SharePoint Lists for customer data and send that data via Outlook to an attacker-controlled email address.

Microsoft's own safety mechanisms flagged the request as suspicious. The data was exfiltrated anyway. The DLP never fired because the email was routed through a legitimate Outlook action that the system treated as an authorised operation.

As Carter Rees, VP of Artificial Intelligence at Reputation, explained: “The LLM cannot inherently distinguish between trusted instructions and untrusted retrieved data. It becomes a confused deputy acting on behalf of the attacker.”

OWASP classifies this pattern as ASI01: Agent Goal Hijack in their Top 10 for Agentic Applications.

The CrowdStrike report also notes that adversaries exploited legitimate GenAI tools at more than 90 organisations by injecting malicious prompts to generate commands for stealing credentials and cryptocurrency. Prompts, as the report notes, have become the new malware.

Deepfakes and AI-Powered Social Engineering

Generative AI has blurred the line between real and synthetic identities. Attackers are combining text generators like ChatGPT with advanced video tools to create convincing, dynamic impersonations for spear‑phishing and CEO fraud.

The CIS report warns that AI-generated deepfakes and fraudulent identities can be used to manipulate employees and bypass traditional verification methods. Simple text‑based phishing is being replaced by multi‑modal deception campaigns.

Nation-State and eCrime Actors Embrace AI

The CrowdStrike report details specific examples of AI adoption by threat actors:

- FANCY BEAR (Russia‑nexus) deployed LLM‑enabled malware (LAMEHUG) to automate reconnaissance and document collection.

- PUNK SPIDER (eCrime) used AI‑generated scripts to accelerate credential dumping and erase forensic evidence.

- FAMOUS CHOLLIMA (DPRK‑nexus) leveraged AI‑generated personas to scale insider operations.

AI is lowering the barrier for sophisticated attacks. Techniques once reserved for nation‑states are now within reach of smaller cybercriminal groups. As IBM notes, with AI‑driven coding tools accelerating software development while potentially introducing unvetted code, software supply chains face unprecedented pressure.

What You Can Do Right Now

The threat landscape has changed, but defenders aren't powerless. Here's what you need to do.

🔴 Immediate Actions (This Week)

- Audit AI tool usage. Take inventory of which GenAI tools your organisation uses, what data they access, and what permissions they have. The principle of least privilege applies to AI assistants too.

- Review prompt injection risks. If you're using agentic AI platforms (Copilot Studio, Agentforce, custom LLM agents), test for prompt injection vulnerabilities. Assume untrusted inputs can reach your model.

- Implement input validation for AI pipelines. Treat prompts and retrieved data as untrusted input. Sanitise, filter, and validate before they reach the model.

🟠 Short‑Term (This Month)

- Require human approval for high‑risk AI actions. CIS recommends that AI tools should not execute code, delete data, or make high‑impact changes without human review.

- Monitor for AI‑generated malware. Traditional signature‑based detection struggles with polymorphic AI‑generated variants. Invest in behaviour‑based detection and EDR solutions.

- Train employees on AI‑specific threats. CIS recommends training staff to recognise emerging AI‑related security risks, including prompt injection attacks and AI‑generated phishing.

- Adopt NIST's Cyber AI Profile. NIST's new framework (NIST IR 8596) layers AI‑specific priorities onto the Cybersecurity Framework 2.0. It's not a replacement – it's an extension to help integrate AI into your existing risk management.

🟢 Long‑Term Strategy

- Assume AI is both a tool and a target. Your defences must account for attackers using AI against you and targeting your AI systems.

- Deploy AI red teaming. Test your AI systems for vulnerabilities using dedicated AI security testing tools.

- Strengthen identity security. With billions of compromised credentials in circulation, strong authentication and continuous verification are non‑negotiable.

- Subscribe to threat intelligence feeds. The AI threat landscape evolves rapidly. Stay informed through CrowdStrike, IBM X‑Force, and industry reports.

References

- CrowdStrike 2026 Global Threat Report: AI Accelerates Adversaries and Reshapes the Attack Surface (February 2026)

- IBM X-Force 2026 Threat Intelligence Index – Executive Summary (February 2026)

- McAfee Labs: Hackers Are Using AI-Written Code to Spread Malware (March 2026)

- CIS Report: Prompt Injections – The Inherent Threat to Generative AI (April 2026)

- VentureBeat: Microsoft patched a Copilot Studio prompt injection. The data exfiltrated anyway (April 2026)

- Adversa AI: OWASP ASI01 – Agent Goal Hijack Technical Guide (April 2026)

- NIST Cyber AI Profile (NIST IR 8596) – Preliminary Draft

Related Reading on ThreatAft

- Microsoft Patches Actively Exploited SharePoint Zero-Day – CVE-2026-32201

- Critical Windows IKE RCE (CVE-2026-33824): Patch Your VPN Servers Now

- Three Microsoft Defender Zero-Days Actively Exploited; Two Still Unpatched

- ZionSiphon: The OT Malware That Could Poison Water Supplies

The Bottom Line

Weaponized AI isn't coming. It's already here.

Attackers are using AI to write malware, automate reconnaissance, and bypass traditional defences. The same tools that promised to revolutionise cybersecurity have been weaponized against us. The CrowdStrike report puts it bluntly: AI is both the accelerant and the target.

But defenders have AI too. The organisations that will survive this shift are those that embrace AI as a defensive tool while hardening their systems against AI‑powered attacks.

Don't wait for the next wave of AI‑generated ransomware. Audit your AI tool usage today. Limit what your AI assistants can access. And for goodness' sake, assume that any untrusted input reaching your LLM might be malicious.

Stay vigilant. The machines aren't taking over – but the people controlling them certainly are.

Written by: ThreatAft Security Team – Specialising in threat intelligence and AI security research.